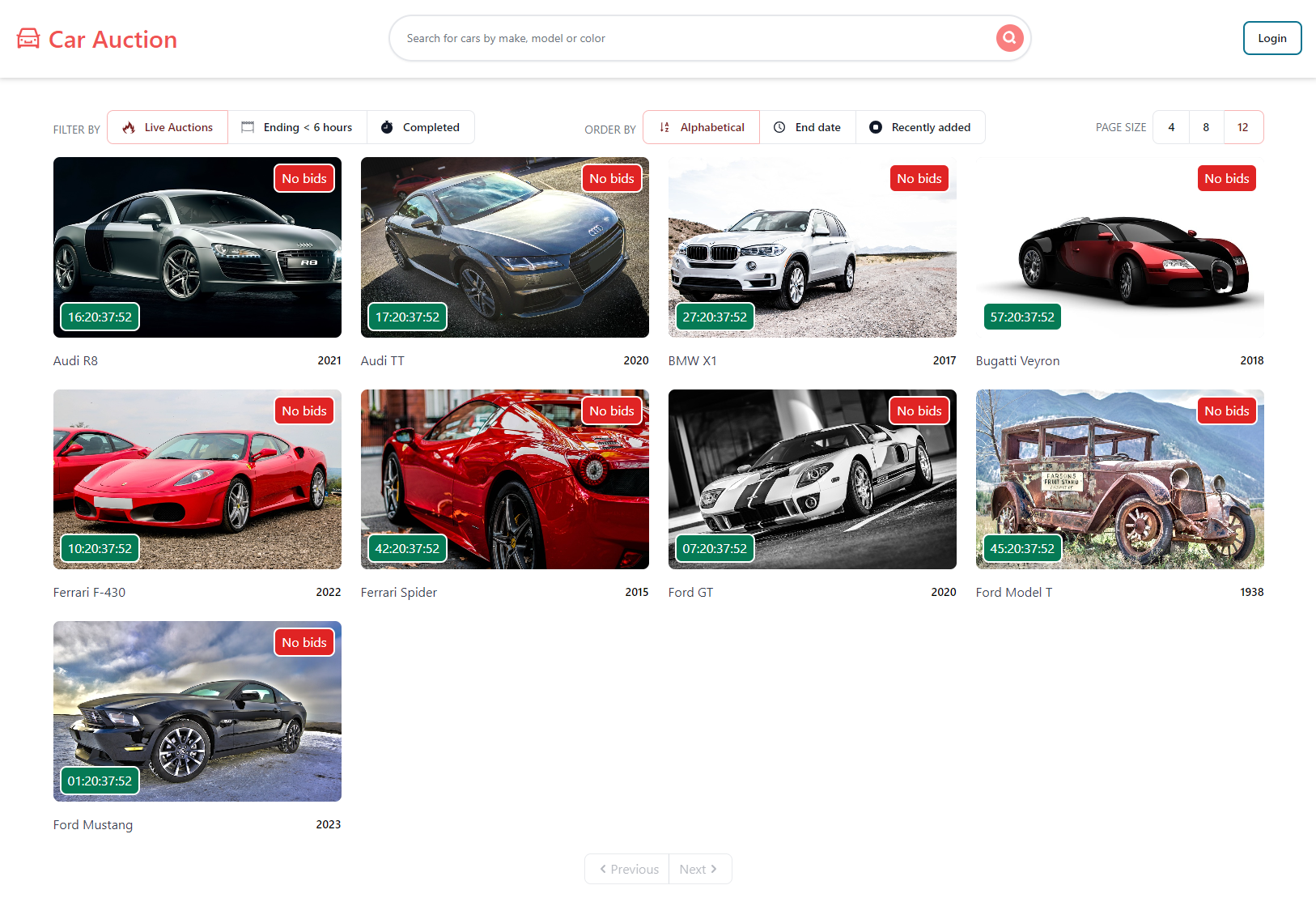

Distributed Car Auction Platform

Architected 5-6 independent microservices in .NET Core, using PostgreSQL and MongoDB for persistence and RabbitMQ and Kafka for asynchronous, event-driven messaging. Implemented production-grade authentication (OAuth 2.0 with IdentityServer) and real-time updates via SignalR; deployed on Kubernetes with a YARP-based API gateway and reverse proxy, and built a Next.js frontend.